Scientific Background

Our current research addresses the effects of age and neurodegeneration on cognition and on system-level brain changes (as revealed by brain imaging), with a focus on cognitive reserve, using large, longitudinal datasets (see first topic below). Our past research focused more on episodic memory: problems with episodic memory are among the first complaints as we grow older, and can be particularly debilitating in ageing and dementia.

- Cognitive Reserve

- Effects of Age on Cognition and the Brain

- Effects of Neurodegeneration on Cognition and the Brain

- Effects of Prior Knowledge on New Memories: The SLIMM model

- Fast Mapping in Adults

- Predictive, Interactive Multiple Memory Signals (PIMMS): a conceptual framework

- Role of Medial Temporal Lobes in Memory and Perception

- Relationship between Recognition Memory and Repetition Priming

- Comparisons of Direct (Intentional) and Indirect (Incidental) Retrieval

- Neural correlates of Recollection and Familiarity in Recognition Memory

- Role of Attention and Awareness in Repetition Priming

- Role of Stimulus-Response Associations in Priming

- Neural Mechanisms of Repetition Suppression and its Use to study Perception

- Using Repetition Suppression to study Neural Bases of Face Processing

- The Philosophy of Functional Neuroimaging (Neophrenology?)

- Open Science

- Methodological Developments in MRI, fMRI, EEG/MEG

- Short-term Memory for Serial Order

Note also that the opinions stated here are, of course, biased (and should not necessarily be associated with some of the collaborators named!)

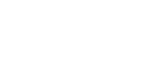

Cognitive Reserve

The term ‘cognitive reserve’ broadly refers to better-than-expected cognitive abilities in old age or following brain damage, presumed to reflect environmental/lifestyle factors earlier in life. However, the term is used loosely in the literature, so our working definition (illustrated with simulations) is given in Henson (2026). We are currently testing this model using longitudinal, multimodal imaging data from several international cohorts, including CamCAN (see below). We are also interested in lifestyle contributions to Cognitive Reserve, with the hypothesis that the relevant activities may need to be practiced for decades and their benefits may take decades to emerge, which is why we have focused on the effect of mid-life activities on late-life cognition/brain (e.g., Chan et al., 2018). However, we are yet to find the brain correlates in late-life of such mid-life activities (Raykov et al., 2023).

We are also interested in the possibility that Cognitive Reserve reflects functional compensation during performance of cognitive tasks, though until now we have failed to find convincing evidence that the age-related hyper-activation in frontal (Morcom et al., 2018) or lateral (Knights et al., 2021) cortices is compensatory (rather than just reflecting inefficiency). Only in a problem-solving matrix task have we found activation that meets criteria for being compensatory (Knights et al., 2024), though this was in a posterior visual region, and may be specific to this particular task. In future work, we will examine task-general patterns of activation/connectivity that may be compensatory.

In larger, multi-cohort studies (such as the LifeBrain consortium; Walhovd et al., 2018), we have shown for example that, while more years in education are associated with higher baseline memory and brain volume in late-life, they do not moderate the subsequent rate of decline of either (Fjell et al., 2025; see also Nyberg et al., 2021). This suggests that education may not in fact boost cognitive reserve, and instead it may be that genetic or early-life factors lead to better memory and brain health, which in turn lead to more years in education, i.e., education may be a consequence rather than cause of cognitive ability. Another potential contributor to cognitive reserve - socio-economic status - may likewise play a role in early-life rather than adulthood (Walhovd et al., 2022). Early-life factors also seem important for the "Brain Age Gap" - the difference between one's chronological age and the age predicted from a brain scan - which we showed does not relate to subsequent longitudinal change (Vidal-Pineiro et al., 2021).

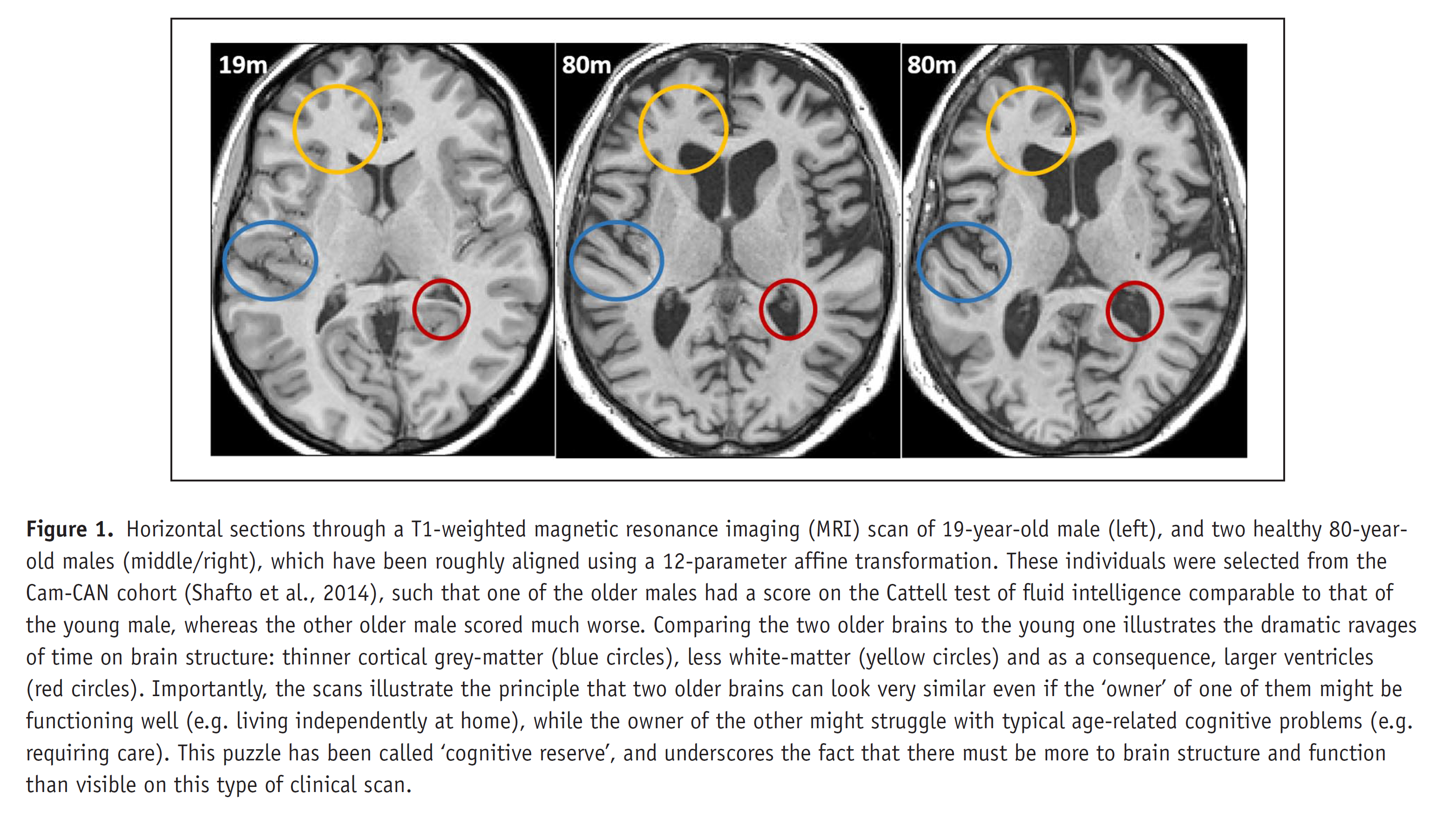

Effects of Age on Cognition and the Brain: CamCAN studies

Much of our work on ageing is conducted as part of the Cambridge Centre for Ageing and Neuroscience (CamCAN): http://www.cam-can.org/. CamCAN includes MRI, MEG, cognitive and demographic data on approximately 750 adults from 18-88 years of age, some of whom have been followed for over 12 years. In 2016, we made the baseline data available on request (from the above website), which has resulted in hundreds of publications (see Henson & CamCAN, 2026 for review).

In terms of memory, and inspired by our prior work on the CID-R paradigm, we included a paradigm designed to estimate associative memory (recollection), item memory (familiarity) and perceptual priming on a trial-by-trial basis. Using structural equation modelling, we found that age affected associative and item memory, but not priming, independent of fluid intelligence and education (Henson et al., 2016). Furthermore, we found that gray-matter volume in hippocampus, parahippocampus and fusiform cortex, together with white-matter integrity of the major fibres connecting these regions, made independent contributions to memory performance, supporting general claims that memories arise from multiple interacting regions (see PIMMS). Data from the same task were used to show that memory for negative stimuli (but not for positive or neutral stimuli) is more sensitive to sub-clinical levels of depression than a standard neuropsychological test (Schweizer et al., 2017), while fMRI data on the encoding phase of the task were used to question the idea of age-related frontal hyper-activation being compensatory (see Cognitive Reserve).

Together with Kamen Tsvetanov, we have investigated the important role of vascular health in cognitive and brain ageing. Following initial evidence that white-matter hyperintensities relate to pulse pressure (difference between systolic and diastolic; Fuhrmann et al., 2019), we showed that pulse pressure relates to the cognitive discrepancy score (difference between fluid and crystallised intelligence, as a proxy for cognitive decline; King et al., 2023) and that this is mediated by white-matter (particularly the peak-width of skeletonised diffusivity; King et al., 2024). We have also related resting-state BOLD signal variability to cardiovascular and neurovascular factors (Tsvetanov et al., 2020) and examined the role of blood flow in cognitive ability (Wu et al., 2022). Age-related vascular changes also have important implications for interpreting the fMRI BOLD signal (see Tsvetanov et al., 2020, for review). Interpreting age effects on fMRI data therefore requires either attempts to correct the data for vascular confounds, both for activations (Tsvetanov et al., 2015) and connectivity (Geerligs et al., 2017), or explicit modelling of the neural versus vascular components of the BOLD evoked response (Henson et al., 2024) and effective connectivity (Tsvetanov et al., 2016).

We have also investigated the "positivity bias" in ageing: the suggestion that older people are biased towards positive-valenced information. We did find faster age-related decreases in memory for negative than positive images in Henson et al. (2016) above, though we have more recently begun to test the hypothesis that such positivity biases are an indication of cognitive decline, rather than of socioemotional factors (Wolpe et al., 2025).

Other neuroscientific findings from CamCAN related to ageing include: 1) dissociation of executive functions according to grey- and white-matter in frontal cortex (Kievit et al, 2014), 2) evidence against the effect of the APOE genetic polymorphism on brain or cognition being moderated by age (in a Registered Report, Henson et al., 2020), 3) dissociation of age effects on the latency of evoked responses from different sensory modalities as measured with MEG (Price et al., 2017), 4) age-related differentiation of brain and cognition, particularly concerning white-matter and memory (de Mooij et al., 2018), 5) differential rates of grey-matter change in different brain regions (Nyberg et al. 2023).

Other methodological developments include: 1) testing "watershed" models of cognition, in which genetic variability drives brain variability which in turn drives cognitive variability (Kievit et al, 2016), 2) comparing different MRI measures of white-matter using factor analysis (Raykov et al., 2025), 3) demonstrating that the effects of age on functional connectivity depend on the state in which that connectivity is measured, questioning the sufficiency of purely resting-state approaches (Geerligs et al., 2015), 4) contributing to brain growth charts (Bethlehem et al., 2022), 5) modelling group-level brain connectivity using Bayesian exponential random graph models (Lehmann et al., 2021).

In addition to CamCAN, we have also run smaller-scale experiments. For example, we have explored the effect of age on fast mapping (Greve et al., 2014) and multinomial models of source memory (Cooper et al, 2017). Together with Jon Simons and Ali Trelle, we argued that age-related memory deficits reflect a combination of representational and retrieval problems, using evidence from both behaviour (Trelle et al, 2017) and imaging (Trelle et al, 2019). This extends our prior work with Audrey Duarte, examining how recollection (Duarte et al, 2008) and familiarity (Duarte et al, 2010), and their neural correlates, change with age.

Effects of Neurodegeneration on Cognition and the Brain

We are involved in several national (e.g., DFP and NTAD/SHINE) and international (e.g., MAGIC, BioFIND) consortia investigating the potential of MEG for early detection of dementia, in particular, Alzheimer’s Disease (Maestu et al, 2015; Hughes et al, 2019). MEG may reveal functional abnormalities before structural atrophy is apparent from MRI. For example, using the BioFIND dataset of resting-state MEG (Vaghari et al, 2022a), which we made available on DPUK, we used stacked machine learning to show that MEG provides additional information beyond MRI in classifying MCI patients versus controls (Vaghari et al, 2022b), and in predicting those MCI patients who progress to probable AD (Gaubert et al., 2025). We also showed that graph-theoretic properties of MEG connectivity (using multivariate autoregressive modelling) can distinguish different dementia pathologies (e.g., typical AD versus bvFTD) in terms of the frequency and scalp location (Sami et al, 2018).

Together with James Rowe and the NTAD/SHINE study (Lanskey et al., 2022), we are also exploring the utility of neurophysiological models (based on the dynamic causal modelling framework) to estimate biological parameters from MEG data. This includes possible cholinergic effects on gain of supra-pyramidal cells (Lanskey et al, 2025a), relative roles of AMPA versus NMDA receptors (Jafarian et al., 2025; Lanskey et al, 2025b), as well as methodological developments (Jafarian et al., 2024).

In addition to MEG, we have explored the potential clinical utility of immersive virtual reality (iVR) to detect early navigational problems in dementia, in collaboration with Dennis Chan. We found that measures of path integration related to biomarker-based risk in patients with MCI (Howett et al, 2019) and in asymptomatic middle-aged people at risk of dementia (Newton et al., 2024). We are also currently exploring a subset of the CamCAN cohort, who had not approached their GPs to complain of dementia-related problems, but who scored below conventional cut-offs for dementia on neuropsychological tests, as well as being part of large-scale consortia that examine, for example, sex differences in dementia (Ravndal et al., 2025) and possible genetic risk factors for memory decline (Vidal-Pineiro et al., 2025; Gorbach et al., 2020).

Finally, we have been exploring, at least in healthy people at first, various interventions that might ultimately help people with memory problems. For example, a novel experience shortly after learning has been claimed to improve memory, by stimulating release of plasticity-related proteins. However, in a Registered Report, we found evidence against this claim (Raza et al., 2025). Instead, we found that it is resting shortly after, or even before, the learning phase that improves memory, and we are currently investigating a range of explanations. Another manipulation that has been proposed to help associative memory (e.g., associating a video with a sound) is to synchronously modulate the amplitude of the visual and audio signals at theta frequency (e.g., 4 Hz). Again, however, our Registered Report provided evidence against this claim (Serin et al., 2024).

Effects of Prior Knowledge on New Memories

New memories are not stored on a tabula rasa: what aspects of a new experience we encode depends on what we already know. Indeed, information congruent with what we already know is generally remembered better. However, information that is contrary to what we know can also be remembered well. We propose that both effects reflect the important role of “schemas” in perception and memory encoding. Schemas are abstract representations of organised knowledge, which make predictions about what we will perceive (e.g., what we expect to encounter in a restaurant). According to our SLIMM model (van Kesteren et al, 2012), schemas are patterns of cortical activity that modulate processing of incoming sensory information. Information that is congruent with a schema is encoded in the cortex, possibly via a gating mechanism in ventral medial prefrontal cortex (vmPFC), whereas information incongruent with a schema is captured as an episodic “snapshot” by a system based in the medial temporal lobes (MTL), particularly the hippocampus. Information that is unrelated to a schema benefits little from either system.

The SLIMM model therefore predicts a U-shaped function of memory against schema congruency, a pattern we have found after training people with artificial schemas (Greve et al., 2019). Furthermore, SLIMM predicts that the two ends of this U-shape should be functionally dissociable, given that they are based on different brain systems. We also found behavioural support for this, with the advantage of congruent but not incongruent information extending to post-encoding processes, and the advantage of incongruent but not congruent information extending to incidental contextual information. We have since replicated this U-shape using more realistic schemas (e.g., objects you might find in a kitchen), implemented with immersive virtual reality, and a continuous subjective measure of expectancy (Quent et al., 2022), though we failed to dissociate the two ends of the U-shape according to recollection versus familiarity.

Fast Mapping in Adults

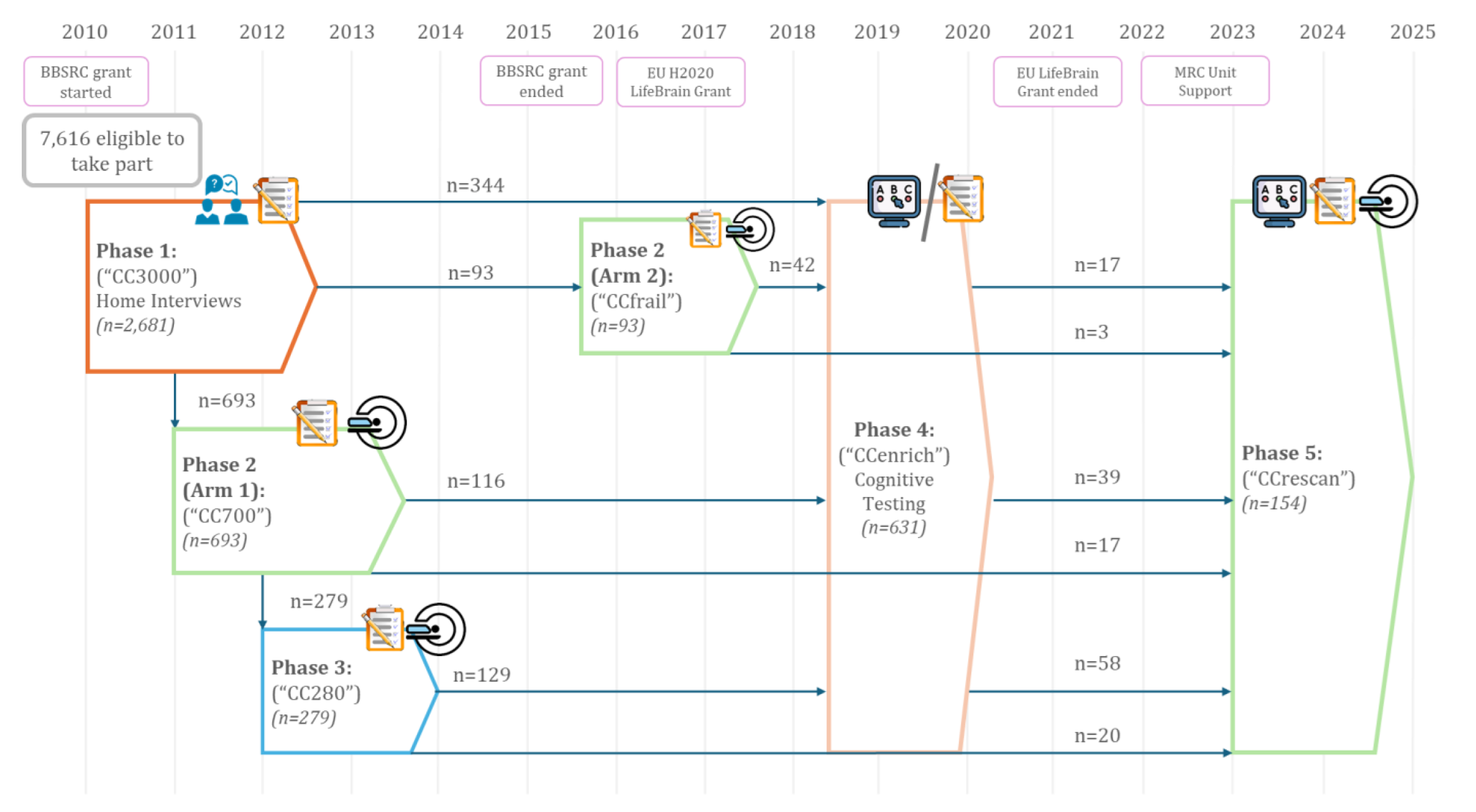

It has been claimed that individuals with amnesia following hippocampal damage can explicitly learn new object-name associations within a single session as well as controls, following a “fast mapping” (FM) procedure. This procedure, inspired by claims in the developmental literature about how infants acquire vocabulary, involves participants inferring the new name from a question that relates the new object to a familiar object. This claim attracted our interest not only for its rehabilitative potential, but also because of the theoretical claims of rapid cortical learning, independent of MTL (consistent with our SLIMM model above). Unfortunately, we have yet to find compelling evidence for FM in our own work. For example, we failed to find any evidence that the FM procedure helps memory in healthy, older individuals, despite MRI-confirmed hippocampal shrinkage (Greve et al., 2014). Instead, FM performance, like memory performance under standard explicit encoding (EE), correlated with hippocampal volume. We have also failed to find any FM advantage in three individuals with confirmed hippocampal damage, or to dissect the cognitive components essential for FM (Cooper et al, 2019a), and nor have we found evidence of an FM advantage in implicit tests of memory (Gurunandan et al., 2023). This led us to question the adult FM paradigm in a review (Cooper et al, 2019b), upon which a number of experts commented, and to which we wrote a final reply (Cooper et al, 2019c). We hypothesize that fast mapping into cortex requires stronger schemas than those provided by the above FM procedure.

There have also been claims that individuals with hippocampal damage can benefit from “unitization”, whereby two new items are bound into a single unit during encoding. For example, memory for the pairing of the words “cloud” and “lawn” benefits when encoded with a definition (“a garden used for sky-gazing”) compared to completion of a sentence (“the cloud could be seen from the lawn”). However, we found no support for our hypothesis that unitization is a special case of schematization, in that we found no evidence of generalisation from one pairing (cloud-lawn) to a related one (moon-lawn), as would be predicted from an abstract structure like a schema (Tibon et al, 2018). Thus unitization seems to be a different type of hippocampal-independent learning, which warrants further investigation, particularly for rehabilitative potential for memory problems following brain injury and ageing.

Predictive Interactive Multiple Memory Signals (PIMMS): a general framework

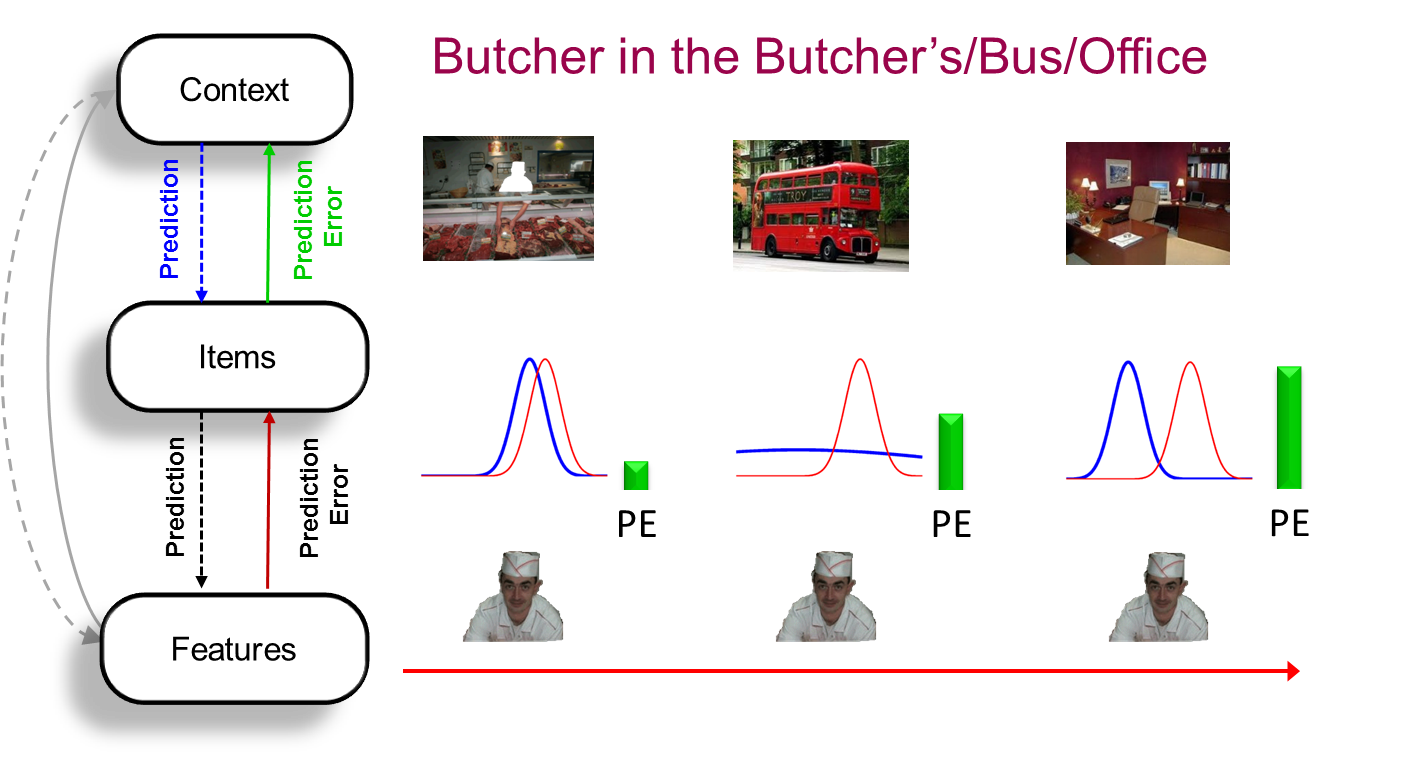

The key idea behind PIMMS is that the brain learns in order to minimise "prediction error" (PE): the difference between what we expect to perceive and what we actually perceive. This reflects the fact that the evolutionary value of memory is not to reminisce about the past, but to predict the present (and simulate the future). The predictions can come from prior knowledge, i.e., schemas (see SLIMM model), or from other recent experience (e.g., priming). Memory and perception are intimately related, in that perception reflects the dynamical (on-line) reduction in PE over a few hundred milliseconds after a stimulus is presented, whereas memory reflects the slower (off-line) synaptic changes that reduce that PE when that stimulus is encountered again. Signals relating to memory arise from this interaction of predictions and prediction errors in multiple brain regions, depending on the information involved. For example, the brain regions involved in representing our spatial environment are constantly predicting activity in brain regions representing the objects that we know (i.e., what objects will be present in that environment), and errors in those predictions (unexpected objects) are fed back to update our predictions (memories) for those environments. The level at which this PE occurs determines whether repetition of an object leads to recollection, familiarity or priming.

PIMMS was first outlined briefly in Henson & Gagnepain (2010). We then spent many years refining a behavioural paradigm to test its predictions while controlling for many other factors that affect memory; specifically to test how well people can remember an association between a scene and an object. PIMMS predicts that their memory will be proportional to the divergence between the prior (the probability of that object given the scene) and the evidence (the probability of that object given sensory input). Sure enough, in three different scenarios where we manipulated the prior and/or evidence, the PIMMS predictions were confirmed by Greve et al. (2017). This perspective on prediction error distinguishes surprise from novelty (Quent et al., 2021).
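As a rough illustration of the "divergence between prior and evidence" idea, the sketch below computes a Kullback-Leibler divergence between two discrete distributions over candidate objects. The choice of KL divergence, its direction (evidence relative to prior), and all the probabilities are assumptions made for illustration only; they are not taken from Greve et al. (2017).

```python
import math

def kl_divergence(p, q):
    """KL(p || q) in bits for two discrete distributions over the same outcomes.

    Assumes every q[i] is > 0 wherever p[i] > 0.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical probabilities over three candidate objects (A, B, C) for one scene.
prior_strong = [0.8, 0.1, 0.1]      # the scene strongly predicts object A
prior_flat = [1 / 3, 1 / 3, 1 / 3]  # the scene predicts nothing in particular
evidence = [0.05, 0.9, 0.05]        # sensory input indicates object B

# Illustrative "prediction error": divergence of the evidence from the prior.
pe_strong = kl_divergence(evidence, prior_strong)
pe_flat = kl_divergence(evidence, prior_flat)

print(f"PE with strong (violated) prior: {pe_strong:.2f} bits")
print(f"PE with flat prior:              {pe_flat:.2f} bits")
```

Under these assumed numbers, the divergence is larger when a strong prior is violated than when the prior is flat, which is the qualitative pattern described above: memory for the scene-object association would be predicted to be better in the former case.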

PE is often invoked in the context of reward, and it has been claimed that memory is a function of signed PE, i.e., improved by positive PE (unpredicted reward) but impaired by negative PE (absence of predicted reward). PIMMS however assumes that memory relates to unsigned PE, i.e., greater surprise will always improve episodic memory encoding, and that this occurs in everyday life, where there is often not an explicit reward (though one could always argue for implicit reward, e.g., motivational). While we were able to replicate previous claims that memory relates to signed PE in a specific "variable choice" paradigm, we also simulated alternative models that predicted the same pattern without appealing to signed PE (Gurunandan et al., 2026). We suspect that, in the context of episodic/declarative memory (rather than conditioning/reward learning), unsigned PE leads to increased attention (or learning rate, a la Pearce and Hall), rather than signed PE directly driving what is learned (a la Rescorla-Wagner). However, it is difficult to separate PE from attention, as simple modelling shows in the context of the role of PE in priming (Kaula & Henson, 2021).
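The distinction between signed and unsigned PE can be sketched with textbook-style update rules. The functions below are simplified, hypothetical forms of a Rescorla-Wagner value update and a Pearce-Hall associability update; the parameter names and values are assumptions, and this is not the modelling reported in the papers cited above.

```python
def rescorla_wagner_update(value, outcome, learning_rate=0.3):
    """Signed PE directly drives the change in the learned value."""
    signed_pe = outcome - value
    return value + learning_rate * signed_pe, signed_pe

def pearce_hall_associability(associability, value, outcome, gamma=0.5):
    """Unsigned PE drives associability (attention / learning rate) on later trials."""
    unsigned_pe = abs(outcome - value)
    return gamma * unsigned_pe + (1 - gamma) * associability

expected_value = 0.5

# Equal-sized positive and negative surprises (outcome better or worse than expected).
v_pos, pe_pos = rescorla_wagner_update(expected_value, outcome=1.0)
v_neg, pe_neg = rescorla_wagner_update(expected_value, outcome=0.0)

a_pos = pearce_hall_associability(0.2, expected_value, outcome=1.0)
a_neg = pearce_hall_associability(0.2, expected_value, outcome=0.0)

print("Rescorla-Wagner values:", v_pos, v_neg)        # move in opposite directions
print("Pearce-Hall associability:", a_pos, a_neg)     # increase equally
```

In this sketch, the two surprises push the Rescorla-Wagner value in opposite directions, whereas they raise Pearce-Hall associability by the same amount, which corresponds to the idea that surprise of either sign could boost attention and hence encoding.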

We are also interested in the role of PE in segmenting continuous experience, as reflected for example in hippocampal activity at event boundaries in movies (Ben-Yakov et al, 2018). Preliminary evidence using stop-motion films found little evidence of surprise on memory for surrounding information, but a suggestion (to be replicated) that surprise can introduce new event boundaries (Ben-Yakov et al, 2021).

Role of MTL in perception and memory

The Medial Temporal Lobes (MTL) have long been known to be important for memory, and more recently, for perception. After several years, we established a panel of rare individuals with focal MTL damage centred on the hippocampus (Henson et al, 2016). This paper showed effects of their hippocampal lesions (from T1-weighted MRI volumetry) on structural connectivity (from Diffusion-weighted MRI) and functional connectivity (from BOLD-weighted, resting-state MRI), demonstrating both connectional and connectomic diaschisis. These individuals have undergone a number of cognitive tests. For example, contrary to prior claims, they showed no evidence of Fast Mapping, nor spared item memory, at least when using multinomial processing trees to overcome the problems with standard analyses in this field (Cooper et al, 2017). Also contrary to our own claims (see stimulus-response learning), we found no evidence that these individuals showed impaired stimulus-response bindings in a priming task (Henson et al, 2017). This is remarkable because it challenges conventional wisdom that brain regions outside the hippocampus cannot support storage of a large number of unique, arbitrary and abstract S-R mappings that are learned from a single trial.

Early fMRI studies of healthy individuals did not have the spatial or temporal resolution to distinguish the functions of different sub-regions within the MTL, such as hippocampus versus perirhinal cortex (Henson, 2005), at least not at the level of stringency I have proposed necessary for claiming functional dissociations (Henson, 2006a). With Bernhard Staresina however, we were able to analyse intracranial ERP data from patients undergoing surgery, which provide sufficient temporal and spatial resolution to reveal dissociable temporal stages within hippocampus and perirhinal cortex associated with source and item memory respectively (Staresina et al, 2013a). This is consistent with neuropsychological work with Chris Butler, in which we found a double dissociation between recollection (source memory) and familiarity (item memory) in a group of patients with varying degrees of MTL damage, though continuous brain-behaviour relationships (rather than binary thresholds for damage) also indicated an important role for parahippocampal cortex, beyond the perirhinal cortex, in familiarity (Argyropoulos et al., 2022).

In addition to this evidence for functional segregation of hippocampus and perirhinal/parahippocampal cortex, Bernhard Staresina and I have also investigated how these regions interact to support successful memory retrieval, i.e., functional integration. Our iEEG data showed functional connectivity in alpha and gamma bands during recollection (Staresina et al, 2012a). Using Dynamic Causal Modelling (DCM) on fMRI data, we found evidence for changes in directional (effective) connectivity between perirhinal cortex, hippocampus and parahippocampal cortex during cued recall as a function of whether the retrieval cue and target were objects or scenes (Staresina et al, 2013b). Using representational similarity analysis (RSA), we also found increased similarity between encoding and retrieval patterns in parahippocampal cortex associated with recollection, and that this encoding-retrieval similarity was correlated with activity levels in hippocampus, implicating hippocampus in driving pattern reinstatement in other MTL regions (Staresina et al, 2012b).

Continuing ideas developed by Morgan Barense, Kim Graham, Lisa Saksida and Tim Bussey, we have also argued for a role of the MTL in perception as well as memory. In particular, we found evidence from patients and fMRI that perirhinal cortex is critical for perception of visual objects that share features with other objects (Barense et al, 2012; Barense et al, 2011; Barense et al, 2010; Lee et al., 2006). What seems key is the nature of the neural representations used across tasks, whether those be perceptual, short-term or long-term memory tasks; an idea incorporated into PIMMS.

Relationship between Recognition Memory and Repetition Priming

In order to explore the relationship between explicit and implicit memory, we concentrated on tests of visual recognition memory and repetition priming, believed to tax declarative and nondeclarative memory systems respectively, and which can be closely matched in their experimental procedures and scoring. Both tests have the benefit of a long pedigree of laboratory study, and their procedural simplicity facilitates their adaptation to the scanning environment.

While some mnemonic processes, such as the fluency of perceptual or conceptual processing of stimuli, are likely to contribute to both tests, a key question is whether more than one such memory process is needed to explain functional (behavioural) differences in such tasks. Together with Chris Berry and David Shanks, we developed a computational model (an extension of signal-detection theory) that assumes only a single memory signal (Berry et al, 2008b). By adding the important assumption of independent task-specific (non-mnemonic) noise, this model does surprisingly well at reproducing many "dissociations" between recognition memory and repetition priming that have been reported, such as the effect of attentional manipulations at study (Berry et al, 2006a; Berry et al, 2010) and impairments in amnesia (Berry et al, 2008a). A property key to explaining these dissociations is a greater measurement noise in typical priming tasks, which makes them less sensitive than recognition memory tasks (indeed, we have consistently failed to replicate prior claims that such an indirect priming task can be more sensitive than a direct recognition task; Berry et al, 2006b). We subsequently generalised the framework in order to compare a range of single- and dual-process models (with varying degrees of dependence between implicit and explicit memory processes; Berry et al, 2012). Using a "CID-R" paradigm, these models can be fit (by maximum likelihood) to trial-by-trial measures of recognition memory (d', Remember/Know judgments; Confidence judgments), priming (d' or RTs) and fluency (RTs). Information criteria continue to support the single-process model over other variants in this model family, though other paradigms and models need to be explored. Moreover, structural equation modelling on a large number of CamCAN participants in a modified version of the CID-R paradigm provided more compelling evidence of more than one underlying memory factor (Henson et al., 2016).
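The core intuition of a single-signal account can be illustrated with a small simulation: one memory-strength value per item feeds both tasks, and each task adds its own independent noise. This is a minimal sketch with assumed parameter values and a simplified priming measure (the published model maps strength onto measures such as RTs); it is not the model fitted in the papers cited above.

```python
import random

def sensitivity(old, new):
    """Standardised old-new difference (a d'-like effect size)."""
    mean_old = sum(old) / len(old)
    mean_new = sum(new) / len(new)
    var_old = sum((x - mean_old) ** 2 for x in old) / len(old)
    var_new = sum((x - mean_new) ** 2 for x in new) / len(new)
    return (mean_old - mean_new) / ((var_old + var_new) / 2) ** 0.5

def simulate_single_system(n_items=20000, strength_old=1.0,
                           noise_recog=0.2, noise_prime=1.5, seed=0):
    """One shared memory signal per item, plus independent task-specific noise.

    All parameter values are hypothetical; priming noise is assumed larger.
    """
    rng = random.Random(seed)
    recog = {"old": [], "new": []}
    prime = {"old": [], "new": []}
    for status, mean in (("old", strength_old), ("new", 0.0)):
        for _ in range(n_items):
            f = rng.gauss(mean, 1.0)  # the single memory signal
            recog[status].append(f + rng.gauss(0.0, noise_recog))
            prime[status].append(f + rng.gauss(0.0, noise_prime))
    return (sensitivity(recog["old"], recog["new"]),
            sensitivity(prime["old"], prime["new"]))

d_recog, d_prime = simulate_single_system()
print(f"recognition sensitivity: {d_recog:.2f}")
print(f"priming sensitivity:     {d_prime:.2f}")
```

Even though both measures derive from the same underlying signal, the noisier priming measure shows a smaller old-new effect. With finite samples, this difference in sensitivity alone could make a manipulation appear to affect recognition but not priming, without requiring separate memory systems.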

We have also explored the relationship between priming and recognition using a paradigm in which words in a recognition memory test are preceded by a masked stimulus that is either a related or unrelated word. Though there is no independent measure of priming, people are more likely to call primed test items "studied", regardless of whether they were in fact studied (though only when unaware of the prime, or at least unaware of its relevance). Using ERPs and repetition primes, we found at least four different spatiotemporal effects associated with: recollection, familiarity, long-term (supraliminal) repetition priming and immediate (subliminal) repetition priming (Woollams et al, 2008). Jason Taylor and I went on to examine the effect of using semantically-related rather than identity primes. Surprisingly, we found situations in which masked semantic primes seemed to increase recollection rather than familiarity, which is difficult to explain with any current theory (though the precise boundary conditions for this effect remain to be established; Taylor & Henson, 2012). This "unconscious priming of recollection" correlates with increased activity in parietal regions previously associated with recollection, suggesting that it is not simply an artifact of using a binary Remember-Know procedure (Taylor et al., 2013).

Priming and recognition can also be studied with a modified word-stem completion paradigm developed by Alan Richardson-Klavehn, in which people intentionally try to complete word-stems with a studied item, but if they cannot, they complete with the first word that comes to mind, and then indicate whether they now recognise the completion from the study phase (those trials where a studied completion is not recognised provide a measure of implicit priming, at least in comparison with unstudied, baseline trials). In an fMRI study together with Alan, Bjoern Schott and Emrah Duzel, we found differences between recognition and priming at both encoding (Schott et al, 2006) and retrieval (Schott et al, 2005). For example, at retrieval, fusiform regions showed reduced activity relative to completion of unstudied items (repetition suppression) for primed trials, regardless of whether the completion was recognised, whereas parietal regions showed increased activity only for recognised completions (while hippocampus showed reduced activity for primed trials, but no difference for recognised trials, relative to unstudied trials).

In summary, whereas computational modelling questions some of the behavioural dissociations used to distinguish explicit and implicit memory, I believe that neuroimaging data, like those in the fMRI and ERP studies above and in sections below, appear more consistent with multiple memory signals. In fact, I think there are good (computational) reasons for distinguishing recollection from familiarity/priming, though fewer reasons to suppose that familiarity and priming have distinct causes; rather familiarity and priming may both reflect fluency at one or more stages of perceptual/lexical/semantic processing, differing only in whether or not that fluency is attributed to the past. Importantly, repetition of a stimulus may increase fluency at several of these processing stages, leading to dissociations between the neural correlates of different types of fluency (Taylor & Henson, 2012). Thus, there may be multiple brain signals that map to the same psychological process. Moreover, the attribution of fluency to the past (i.e., leading to a feeling of familiarity) is itself likely to be a complex process, with one important factor being whether the fluency is expected or unexpected (related to "predictive theories" of memory like PIMMS).

Comparisons of Direct (Intentional) and Indirect (Incidental) Retrieval

Another way to separate implicit and explicit memory is to compare retrieval tests that are matched in every way except whether or not the instructions refer to a prior episode (Schacter's "retrieval intentionality" criterion). One example is the word-stem completion paradigm, where word-stems are completed either with the first word that comes to mind ("indirect" test) or with a specific word that was previously studied ("direct" test). Though direct and indirect tests are not necessarily pure measures of explicit and implicit memory, one can use further manipulations to test for contamination of, for example, an indirect test by explicit memory (though a failure to find such contamination could simply reflect the lesser sensitivity of indirect tests; see the section above). With Christiane Thiel, we showed that deeper (more semantic-based) encoding of words, which is known to increase explicit memory, had no effect on an indirect word-stem completion task (i.e., suggesting that priming was not contaminated by explicit memory). At the same time, administration of the drugs lorazepam and scopolamine (versus placebo) reduced both behavioural priming and repetition-related reductions in cortical regions associated with this task (Thiel et al, 2001). These data reinforce those of Schott et al (2005) in suggesting that these cortical repetition suppression effects reflect implicit memory, and moreover suggest that both GABAergic and cholinergic systems are important for the plasticity associated with this type of memory.

However, an fMRI study with Cristina Ramponi (Ramponi et al, 2011) suggested that the relationship between intention and awareness, and between behavioural and brain data, is more complex. For example, contamination of an indirect test by explicit memory could be intentional (voluntary), if a participant uses a strategy of recalling from a prior study episode, or incidental (involuntary), if a participant recognises a completion, after it has come to mind, as having been studied previously - which, in the latter case, may not affect behavioural measures of implicit memory, but might confound concurrent neuroimaging correlates. Using word-pairs rather than word-stems, we showed that manipulations of both 1) the depth of encoding and 2) emotionality of the word-pairs had significant effects on the direct task of cued-recall, but neither had a detectable effect on the indirect task of free-association, again suggesting that the latter provides a pure behavioural measure of (conceptual) priming. In the fMRI data, we found greater hippocampal activity for studied relative to unstudied completions in the direct than indirect task, consistent with hippocampal involvement in intentional or explicit retrieval. However, we also found study-related activity in parietal regions, previously associated with explicit retrieval, in both tests (and that this activity was greater following deeper encoding). This suggests that, even though the behavioural data from the indirect task may not be contaminated by explicit memory, the neuroimaging data were "contaminated" by conscious memory for the study episode (that arose incidentally to, and perhaps after, the behavioural completion). 
This potential dissociation between intentional and incidental explicit memory clearly deserves further investigation, for example in relation to the Schott et al (2005) study, where effects of retrieval volition, when comparing direct vs indirect tasks, were found mainly in prefrontal cortex; and the study of Henson et al. (2002), where fusiform repetition suppression for familiar faces was found during an indirect task (fame judgment), but not during a direct task (recognition memory). This difficulty with using (sluggish) techniques like fMRI may also explain our failure to find differences even between retrieval from semantic versus episodic memory (Tibon et al., 2026).

In collaboration with Emily Holmes, we have also explored differences between intentional (voluntary) and incidental (involuntary) retrieval in relation to the intrusive memories of traumatic events that are symptomatic of many emotional disorders like PTSD. We replicated Emily's prior work, namely that simply by playing a visual computer game (Tetris) shortly after watching distressing films, the incidence of involuntary intrusions can be reduced, without affecting voluntary retrieval of those films (which is important, e.g, for eye-witness testimony), even when doing our best to control for other retrieval factors (Lau et al, 2019). The game-play is assumed to interfere with the consolidation of imagery-based memory traces that underlie memory intrusions, and which are hypothesised to be independent of other declarative traces. We subsequently developed a paradigm to investigate intrusions in the laboratory, which showed the same selective effects of Tetris (Lau et al, 2021), as well as another paradigm to investigate intrusions in fMRI (Visser et al., 2022).

Neural correlates of Recollection and Familiarity in Recognition Memory

In collaboration with Mick Rugg, we used several experimental manipulations to attempt to separate the fMRI correlates of familiarity and recollection, including Remember/Know judgments (Henson et al, 1999a), confidence judgments (Henson et al, 2000), deep/shallow study tasks (Henson et al, 2005), and modified source memory tasks (Rugg et al, 2002), including memory for emotional context (Smith et al, 2004; Smith et al, 2005; Maratos et al, 2001). In general, these experiments have supported the recollection/familiarity distinction, though the data are far from conclusive. Important findings include the association of posterior cingulate and inferior parietal cortex with recollection (for review, see Rugg et al, 2002), and the association of anterior medial temporal cortex (outside the hippocampus) with familiarity (for meta-analysis, see Henson et al, 2003, and for review, see Henson, 2015).

A further common finding has been the activation of prefrontal cortex during recognition memory. After considering a large number of similar findings in our laboratories, Tim Shallice, Paul Fletcher and I hypothesised that these activations reflect a number of distinct control processes that work to optimise memory performance; for example, in monitoring retrieved information in relation to the task goals (Henson et al, 1999b; Henson et al, 2002; for review, see Fletcher & Henson, 2002). Indeed, even a manipulation as simple as the ratio of old to new items in a recognition memory test dissociated prefrontal activations, which we attributed to control processes, from parietal activations, which we attributed to "true" explicit memory retrieval (Herron et al, 2004). This reinforced the importance of subtle procedural changes, such as what participants perceive as the targets in a memory test (issues that are well-known in the ERP literature, but often overlooked in behavioural studies). Another type of retrieval control process we have investigated is "retrieval orientation" – a state in which one is attempting to retrieve a specific type of information – using both EEG (Hornberger et al, 2006a) and fMRI (Schott et al, 2005). More recently, however, we have found regions in anterior prefrontal cortex (BA 10) that seem to correlate specifically with contextual retrieval, and differentially according to the nature of that context (Simons et al, 2008; Duarte et al, 2009; Gilbert et al, 2010). Together with Jon Simons, we combined MEG and fMRI to explore the dynamics of these prefrontal regions in recollection (Bergstrom et al, 2013).

Note that the recognition memory paradigm has limitations. Firstly, the paradigm may only tax a subset of memory retrieval processes. The provision of a strong retrieval cue (a so-called "copy cue") minimises the need for participants to engage in elaborative search strategies (as is required, for example, when freely recalling studied items). Unfortunately, recall paradigms are more difficult to adapt to the scanning environment, since they normally require spoken responses, which can cause movement-related artefacts in fMRI and EEG/MEG data. By developing methods to minimise such artefacts in fMRI (Henson & Josephs, 2002), we successfully investigated proactive interference in a cued recall paradigm (Henson et al, 2002), the results of which further supported the role of prefrontal cortex in monitoring memory retrieval.

The above experiments concentrated on the test phase of the recognition memory task. In collaboration with Leun Otten, we conducted fMRI experiments focused on the study (encoding) phase, investigating neural activity that predicts subsequent memory. We showed that the hippocampus appears to predict subsequent memory regardless of the study task (Otten et al, 2001). Other regions, like anterior left inferior prefrontal cortex, appear to predict subsequent memory only during tasks that require semantic elaboration (see Rugg et al, 2002, for review). We were also able to distinguish subsequent memory effects that occur on an item-by-item level from those that reflect longer-lasting "states" (e.g, periods of sustained attention; Otten et al, 2002).

With Audrey Duarte, we investigated how the neural correlates of recollection (Duarte et al, 2008) and familiarity (Duarte et al, 2010) change with age. We also contrasted recollection and familiarity for different types of stimuli (e.g, faces vs scenes), given increasing evidence from patients of differential involvement of MTL structures in memory for spatial vs nonspatial stimuli (Taylor et al, 2007; Duarte et al, 2011). With Roni Tibon, we have used cued-recall to further investigate the role of inferior parietal cortex, particularly angular gyrus, in both encoding and retrieval of vivid, multimodal memories (Tibon et al, 2019).

Role of Attention and Awareness in Repetition Priming

Some have argued that priming effects can occur in the absence of attention, or are not affected by attentional manipulations. I believe such claims are either questionable, or in the latter case, can be explained by the lower sensitivity of typical priming tasks (Berry et al, 2006a; Berry et al, 2006b; Berry et al., 2010). Like others, I believe that spatial and temporal attention is necessary for priming effects, and in three fMRI experiments, we failed to find any evidence of BOLD repetition suppression in ventral stream regions for faces, houses or objects that appeared in the ignored hemifield, whether as primes or probes (Eger et al, 2004; Henson & Mouchlianitis, 2007; Thoma & Henson, 2011).

However, one can attend to a location in space (and time) but still not be aware of a stimulus, such as when it is presented briefly between a forward and backward mask (i.e., subliminal). Together with Sid Kouider, we used such sandwich masking of faces and showed reliable behavioural priming that was not easily explicable by measures of participants' ability to see the prime (and which could not be explained by stimulus-response learning). We also showed concurrent repetition suppression in occipital and fusiform face areas using fMRI (Kouider et al, 2009), demonstrating that modulation of activity in such ventral stream areas can occur without awareness (but with attention). This suppression even occurred across different views of faces and for both familiar and unfamiliar faces. An ERP version of this paradigm (Henson et al, 2008) revealed two subliminal repetition effects, an early one (100-150ms post-probe onset), which was sensitive to view but not familiarity (much like the fMRI data), and a later one (300-500ms post-probe onset), which was sensitive to familiarity (much like the behavioural priming). Much of the above on neural correlates of face priming is summarised in Henson (2016).

Role of Stimulus-Response (S-R) Associations in Priming

Priming is often conceptualised in terms of the facilitation of one or more computational stages associated with a given stimulus and task. In classification tasks, for example, where priming is measured in terms of faster RTs, such "component processes" might include perceptual identification of the stimulus and/or retrieval of semantic information relevant to the task (for reviews, see Henson, 2003; Henson, 2008; Henson et al., 2014). However, the response made to a stimulus in such tasks can become directly associated with that stimulus, such that the priming produced by repetition of the stimulus can potentially be caused by rapid retrieval of the response, circumventing many of the prior computations. The potential role of such "S-R learning" has long been known in behavioural research, but has become important in relation to the fMRI phenomenon of repetition suppression (RS), which has been used as a tool to localise different representations within the brain. For example, Dobbins et al (2004) argued that in many cases RS does not reflect local computations, but rather a bypassing of those regions/computations owing to S-R retrieval, based on their finding that RS in fusiform regions associated with visual object processing was abolished when S-R retrieval was disrupted (by reversing the classification task).

Given that other studies of ours had found RS in fusiform regions under conditions deliberately chosen to minimise response learning (e.g, Henson et al, 2000; Henson et al, 2003), Aidan Horner and I repeated the Dobbins et al paradigm in a series of experiments and found that S-R associations indeed dominate priming in this paradigm (Horner & Henson, 2009). In fact, we found evidence of simultaneous bindings between stimuli and multiple levels of response representation (from the specific finger press, to the "yes/no" decision, to an abstract classification). Indeed, our data suggested RT priming in such speeded classification tasks is dominated by S-R learning (though priming in other paradigms, particularly identification of degraded stimuli, would seem more difficult to explain by S-R learning). We also replicated Dobbins et al's finding that RS in prefrontal regions is sensitive to task switches believed to modulate S-R associations (Horner & Henson, 2008). Importantly however, in our data, the RS in perceptual (fusiform) regions appeared invariant to such task switches, suggesting that S-R associations cannot explain RS in all brain regions (consistent with our previous work using faces; Henson et al, 2000; Henson et al, 2003); for a more complete Multiple-Route, Multiple-Stage (MR-MS) model, see Aidan's PhD Thesis. We have since demonstrated that S-R associations are even more flexible than previously thought. Using an "optimised" changing-referent paradigm that reverses multiple levels of response representation, we found that S-R associations can code multiple stimulus (S) representations too (Horner & Henson, 2011). By combining fMRI and ERP, we also showed that the contributions of such "abstract" S-R learning were locked to response-onset, and most likely generated by prefrontal cortex (in contrast to a stimulus-locked component most likely generated by fusiform cortex; Horner & Henson, 2012). 
Finally, we ran our optimised changing-referent paradigm on six individuals with hippocampal damage (see above), and were surprised to find no evidence for reduced use of S-R associations (Henson et al, 2017), suggesting these multiple, one-shot, arbitrary associations are represented outside the hippocampus.

S-R learning also plays a role in other types of priming, such as negative priming and subliminal priming. Doris Eckstein and I studied the role of S-R learning in subliminal (masked) "categorical" priming of faces (e.g., "famous/nonfamous", "male/female" classifications). Data from this task have been used to argue for unconscious semantic access, yet we showed that S-R learning was sufficient to explain all of the priming that we observed (Eckstein & Henson, 2011). Nonetheless, while this could be used to question the existence of unconscious semantic access, other paradigms (e.g., using semantically-related primes) have reported subliminal semantic priming that is more difficult to explain by S-R learning. This reinforces the general picture that S-R learning is an important factor in priming, but not the only factor, and that further work is needed to better separate these factors in many paradigms, particularly in controlling more fully for the surprisingly flexible contributions of S-R learning (see Henson et al, 2014, for review).

Neural Mechanisms of Repetition Suppression and its Use to study Perception

The most common finding in fMRI/PET studies of priming is a reduced haemodynamic response for repeated vs initial presentations of stimuli. This reduction has been termed "repetition suppression" (RS) (or in the context of immediate or sustained presentations, "fMR-adaptation"). It is often assumed that RS reflects facilitation of the processes performed by a set of neurons owing to performance of the same processes in the recent past. If so, RS can be used as a tool to map the brain regions associated with different processes (much like priming has been used behaviourally). For instance, if a brain region shows RS when a particular view of an object is repeated, but not when the same object is repeated from a different view, then it can be inferred that the processes performed by that brain region operate over view-specific rather than true "object-based" representations. Indeed, it has often been claimed, and demonstrated in at least one case, that this use of RS affords higher spatial resolution than more typical categorical subtractions of different stimulus-types.
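The inference described above can be written out as a small decision rule. The following is only a sketch of the logic, not an analysis we have published: the function name, the condition labels and the fixed `min_effect` threshold are all hypothetical, and in a real study the threshold would be replaced by proper statistical tests on the responses.

```python
def classify_rs(initial, same_view_repeat, diff_view_repeat, min_effect=0.1):
    """Label a region from its mean responses in three (hypothetical) conditions.

    initial          -- response to the first presentation of an object
    same_view_repeat -- response when the same view is repeated
    diff_view_repeat -- response when the object is repeated from a new view
    min_effect       -- illustrative stand-in for a statistical test
    """
    rs_same = initial - same_view_repeat   # suppression for identical views
    rs_diff = initial - diff_view_repeat   # suppression that survives a view change
    if rs_same < min_effect:
        return "no reliable RS"
    if rs_diff < min_effect:
        return "view-specific"
    return "view-invariant"


# Made-up response values, in arbitrary units.
print(classify_rs(1.0, 0.6, 0.95))   # RS only when the view is repeated
print(classify_rs(1.0, 0.6, 0.65))   # RS generalises across views
```

Note that this rule inherits the assumption stated above, namely that RS reflects facilitation of the processes performed locally by that region.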

We have applied this logic to the study of faces and also to other types of visual object. For example, we found evidence for fairly abstract representations of visual objects in fusiform cortex, based on RS that generalised across mirror-reflections (Eger et al, 2004) and even across vertically-split images (at least when attended; Thoma & Henson, 2011). We also replicated an intriguing laterality effect in fusiform representations, with greater generalisation across views in left than right fusiform (Vuilleumier et al, 2002).

Other findings however suggest some caution in such conclusions. Foremost, when we slowed down object recognition in an attempt to investigate the dynamics of this abstract RS (using fMRI and semantic primes), we only found RS in fusiform AFTER the point of identification (Eger et al, 2007). This raises concerns that even with normal object presentation, RS revealed by the sluggish fMRI response may be a consequence rather than a cause of priming. Secondly, we have found RS in some posterior "visual" regions even generalises from an object name to an object picture, where there is negligible visual overlap (Horner & Henson, 2011). Thirdly, we have recently found evidence to suggest that the RS found across views of objects (bodies or faces) in occipital brain regions may derive from changes in the effective connectivity from "higher" regions in the visual processing pathway (Ewbank et al, 2011; Ewbank et al, 2013; Ewbank & Henson, 2012).

Given these issues, and the prevalence of RS in the study of cortical representations, it is critical to relate this haemodynamic phenomenon to its assumed underlying neural cause. At least three different neural models of RS have been advanced (Henson, 2003), and in 2006, Kalanit Grill-Spector, Alex Martin and I were asked to compare and contrast our favoured models in a TICS paper (Grill-Spector et al, 2006). It is likely that all three models (the fatigue, sharpening and facilitation models) apply to some extent under different experimental conditions and in different brain regions. I have concentrated on dynamical models (which we called "facilitation models"; Henson, 2012), from considering data from human EEG and MEG in the same paradigms (Henson & Rugg, 2003). Some key assumptions are that RS can reflect a shorter duration of neural activity (Henson & Rugg, 2001), and that EEG/MEG repetition effects (at least for long-lags; Henson et al, 2004) tend to onset relatively late, i.e., after the initial stimulus-specific responses (such as the N/M170 evoked component that is maximal for faces; Henson et al, 2003). As noted above, one possibility is that these repetition effects (and RS) reflect re-entrant feedback from higher levels of the sensory processing hierarchy (Ewbank et al, 2013), or even from parietal (Eger et al, 2007) or prefrontal (Horner & Henson, 2012) regions, particularly if decision processes (like S-R learning) are not controlled. We used Dynamic Causal Modelling (DCM) of fMRI data during face processing to support the more general claim that repetition modulates the effective connectivity between brain regions (Lee et al., 2022).

Most recently, we have simulated multiple neural models and found that only a local scaling of neural activity is consistent with a range of univariate and multivariate effects of repetition on fMRI data (Alink et al., 2018). This is not inconsistent with suppression, from higher-order brain regions, of those neurons encoding prediction error (as according to the PIMMS framework). However, the important implication of PIMMS, if correct, is that the regions showing maximal RS may not be the regions where the critical dimensions of interest are represented - they may be downstream of the critical regions (or, given that repetition causes synaptic changes between all regions in this model, the representations may not be stored in any one, single region).

Using Repetition Suppression to study Neural Bases of Face Processing

Much is known about how humans perceive faces, and much is known about neural responses to faces in nonhuman primates. One way to map the neuroanatomy of face processing in humans is to use the technique of fMRI repetition suppression (RS). I began by contrasting repetition effects for familiar and unfamiliar faces, assuming that only the former would have long-term perceptual representations (e.g, FRUs in the Bruce & Young model). In fact, we found RS for familiar (famous) faces, but repetition enhancement (RE) for unfamiliar (previously-unseen) faces in face-responsive regions. A similar interaction between familiarity (pre-experimental exposure) and repetition (experimental exposure) was found for other stimuli such as symbols, prompting us to suggest that RS reflects modification of existing representations, whereas RE reflects the (initial) formation of new representations (Henson et al, 2000).

Subsequent experiments showed this story cannot be so simple, particularly given that fMRI RS appears highly sensitive to the task (Henson et al, 2002) and the lag between repetitions (Henson et al, 2004a), among other factors (see above section). Indeed, RE is not always seen for unfamiliar faces; sometimes (particularly for immediate repetitions with no intervening items) unfamiliar faces show RS (Henson et al, 2004b), though a consistent pattern across six experiments using long-lag repetition is greater RS for familiar than unfamiliar faces (Henson et al, 2000; 2002; 2004b; Henson et al, 2003; Thiel et al, 2002; Kouider et al, 2009).

Switching to immediate repetition paradigms, we have continued to use RS to explore the sensitivity of different brain regions to different aspects of faces, concentrating on the fusiform face area (FFA), occipital face area (OFA) and superior temporal sulcus (STS). For example, we found that FFA is sensitive to identity but not expression changes, while STS is sensitive to expression but not identity changes (Winston et al, 2004); and that FFA appears to reflect the categorical perception of identity (using morphs of familiar faces), whereas OFA is more sensitive to image properties (Rotshtein et al, 2005). Furthermore, a more anterior FFA region showed some generalisation of RS across different photographs of the same person, but only when that person is familiar, whereas RS in more posterior fusiform and occipital regions was sensitive to the specific photograph (Eger et al, 2005). Finally, a recent long-duration adaptation paradigm implicated a more anterior STS region in gaze perception (Calder et al, 2007). The above results are generally consistent with popular models that postulate parallel routes for processing identity (FFA) and expression/eye-gaze (STS), but sharing an initial stage of structural encoding (OFA). Our more recent work, however, shows that activity in these regions also depends on featural versus configural processing strategies (Cohen-Kadosh et al, 2009); and note that some of these fMRI RS results may be specific to short-lags (e.g., reflect the influence of a short-lived visual iconic store, or "pictorial codes" in the Bruce & Young model). We also explored the effects of face repetition on effective connectivity using Dynamic Causal Modelling (DCM) applied to the Wakeman fMRI dataset (Lee et al., 2022).

Elias Mouchlianitis and I also tested possible laterality differences in visual-object representations in ventral temporal regions by using divided visual field presentation of faces, and testing priming within versus across different views. We replicated the well-known right-hemisphere advantage in face-processing, but found little evidence for interactions between hemisphere (visual field) and effects of view on priming. We did however find hemispheric differences in priming (regardless of view), when crossing hemifield of prime and probe, and explored this further with MEG and fMRI; for more details, see Elias's PhD Thesis.

Finally, some of our methodological work has implications for the N/M170 component associated with face-processing, such as its dependence on both power increase and phase-locking (Henson et al, 2005), and its likely multiple generation in left and right FFA and OFA, as well as right STS, based on both multimodal approaches to M/EEG source localisation (Henson et al, 2009) and M/EEG in a patient with OFA/FFA lesions (Alonso-Prieto et al, 2011).

The Philosophy of Functional Neuroimaging (Neophrenology?)

Many people have criticised functional neuroimaging as "neophrenology", in reference to the field of phrenology from the C19th, which is now largely ignored (see book by William Uttal). I (among others, e.g., Tim Shallice) have argued that this is not the case. More specifically, I have argued that functional neuroimaging does not only seek to "localise" functions in the brain, but can also inform psychological-level theories (Henson, 2005). For example, in relation to the debate concerning different memory systems (above), I would argue that functional neuroimaging provides additional data that can inform the debate between, e.g., single- vs dual-process models of recognition memory (a debate that has so far proven difficult to resolve on the basis of behavioural data alone). In particular, I distinguished two types of psychological inference one can make from fMRI data, which have become known more succinctly as "forward" and "reverse" inference (the latter introduced by Russ Poldrack), related to the simple ideas of "dissociations" and "associations". However, such inferences still require additional "bridging" assumptions (much like the field of cognitive neuropsychology), and certainly more thought than is typically given in functional neuroimaging papers. For example, I argued that the claim of "qualitatively" different patterns of activity over the brain, necessary for a "forward inference", requires criteria more stringent than even a double-dissociation between brain regions and experimental conditions; criteria that resemble the "reversed associations" promoted in psychological research (Henson, 2006a). More recently, in a commentary on a detailed methodological review by Sternberg, I expressed these criteria more formally, in terms of "state-trace" analysis (Henson, 2011). 
Using state-trace analysis of intracranial ERP data from hippocampus and perirhinal cortex, we were the first to report definitive evidence for more than one memory process occurring across these two medial temporal lobe regions (Staresina et al, 2013).
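To give a flavour of the state-trace idea: if a single underlying process drives two measures, then plotting one measure against the other across conditions should give points that fall on a monotonic curve, so any pair of conditions ordered one way on the first measure and the opposite way on the second is evidence against a single process. The sketch below simply counts such "discordant" pairs. It is an illustration only, with a hypothetical function name and made-up numbers, and it ignores measurement noise, which any real state-trace analysis (including those cited above) must handle statistically.

```python
from itertools import combinations


def discordant_pairs(measure_a, measure_b):
    """Return pairs of condition indices ordered oppositely on the two measures.

    measure_a, measure_b -- equal-length lists, one value per condition
    An empty result means the state-trace plot is monotonic (no evidence,
    in this noise-free sketch, for more than one underlying process).
    """
    pairs = []
    for i, j in combinations(range(len(measure_a)), 2):
        diff_a = measure_a[i] - measure_a[j]
        diff_b = measure_b[i] - measure_b[j]
        if diff_a * diff_b < 0:        # opposite signs: ordering is reversed
            pairs.append((i, j))
    return pairs


# Made-up values for four conditions in two (hypothetical) regions.
region_1 = [1.0, 2.0, 3.0, 4.0]
monotonic = [0.5, 0.9, 1.0, 2.0]       # rises whenever region_1 rises
non_monotonic = [0.5, 1.5, 1.0, 2.0]   # conditions 1 and 2 are reversed

print(discordant_pairs(region_1, monotonic))
print(discordant_pairs(region_1, non_monotonic))
```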

Max Coltheart is one of a number of researchers who have questioned whether functional neuroimaging has yet told us anything new about psychological theories, and critiqued some of the examples I gave in the 2005 paper above (see also papers by Mike Page). In my reply (Henson, 2006b), I pointed out that his criticisms nearly always related to psychological issues in the design of the imaging experiments, and not the more theoretical question of whether a perfectly designed (from the psychological perspective) imaging experiment could ever be informative. I also questioned whether some of his examples of theoretically important conclusions from behavioural data were not open to the same questions, and pondered the more fundamental question about whether experimental dissociations and fractionation are appropriate for systems as complex and nonlinear as the brain (see also Henson, 2011).

On a more specific issue, I have argued together with Karl Friston against the use of functional localiser sessions to identify regions of interest (fROIs) over which the BOLD signal is averaged (Friston et al, 2006; Friston & Henson, 2006). Note that we did not argue against the functional definition of regions per se (nor did we argue that such regions should not be defined on an individual basis; cf. Saxe et al, 2006); only that fROIs are normally better identified by orthogonal contrasts within the same experimental session, and that they may be better used as search volumes in order to allow for functional heterogeneity within an fROI.

Open Science

We are aspiring towards more Open Science, with the help of the CBU Open Science Committee (http://www.mrc-cbu.cam.ac.uk/openscience/). We strive to ensure easy access to our data, through a managed access system to ensure we honour the consent given by our volunteers, either for CBU papers (http://www.mrc-cbu.cam.ac.uk/publications/opendata) or CamCAN (http://www.cam-can.org/index.php?content=dataset). We have also written our first opinion piece, on how to improve the abstract submission process for conferences (Tibon et al, 2018; see also Brouwers et al., 2021). Together with the British Neuroscience Association (BNA), we have explored opinions on publishing (Clift et al., 2021). We have also contributed to international guidelines for both fMRI (Poldrack et al, 2008) and MEG (Gross et al, 2013), including the definition of the BIDS standard for representing MEG data (Niso et al, 2018).

Methodological Developments in MRI, fMRI, EEG/MEG Analysis

Our early methodological work was inspired by Karl Friston and the Statistical Parametric Mapping (SPM) group. This coincided with the advent of event-related fMRI, including issues like how to model the neural-haemodynamic mapping (Josephs & Henson, 1999), how to model the BOLD impulse response (for which three degrees of freedom seem sufficient; Henson et al, 2000; Henson et al, 2001; Henson, 2004), how to accommodate different slice acquisition times (Henson et al, 1999), how to characterise BOLD impulse response latency (Henson & Rugg, 2001; Friston et al, 2002), how to record and model speech in the fMRI scanner (Henson & Josephs, 2002), and how to evaluate the importance of optical head-tracking for multivariate pattern analysis (Huang et al., 2018).
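As a rough illustration of what "three degrees of freedom" for the BOLD impulse response can mean, one widely used choice is a canonical response function plus two partial derivatives, one with respect to onset time and one with respect to dispersion. Whether this is exactly the basis set intended in the papers cited above is an assumption here. The sketch below builds such a set from a difference of two gamma densities; the function names, the parameter values (peak and undershoot delays of 6 and 16, undershoot ratio of 6) and the finite-difference step sizes are all illustrative choices rather than a reference implementation.

```python
import math


def gamma_pdf(t, shape, scale=1.0):
    """Gamma probability density; zero for t <= 0."""
    if t <= 0:
        return 0.0
    return (t ** (shape - 1) * math.exp(-t / scale)
            / (math.gamma(shape) * scale ** shape))


def hrf(t, peak_delay=6.0, undershoot_delay=16.0, dispersion=1.0, ratio=6.0):
    """Canonical-style response: an early positive lobe minus a later undershoot."""
    return (gamma_pdf(t, peak_delay / dispersion, dispersion)
            - gamma_pdf(t, undershoot_delay / dispersion, dispersion) / ratio)


def basis_set(times, dt=0.01, dd=0.01):
    """Return three basis functions sampled at `times` (in seconds).

    The two derivatives are approximated by finite differences: a small
    shift in onset (dt) and a small change in dispersion (dd).
    """
    canonical = [hrf(t) for t in times]
    temporal = [(hrf(t) - hrf(t - dt)) / dt for t in times]
    dispersion = [(hrf(t) - hrf(t, dispersion=1.0 + dd)) / dd for t in times]
    return canonical, temporal, dispersion


times = [0.5 * i for i in range(65)]           # 0 to 32 s in 0.5 s steps
canonical, temporal, dispersion = basis_set(times)
peak_time = times[canonical.index(max(canonical))]
print(peak_time)                               # time of the canonical peak
```

Fitting a weighted sum of these three functions to each voxel lets the estimated response vary a little in latency and width around the canonical shape, at the cost of three parameters per event-type rather than one.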

A particular interest has been in how to optimise experimental designs for fMRI (Josephs & Henson, 1999; Friston et al, 1999; Mechelli et al, 2003a; Mechelli et al, 2003b), summarised in this webpage: http://imaging.mrc-cbu.cam.ac.uk/imaging/DesignEfficiency. More recently, we explored the implications of trial-level variability for the efficiency of designs for trial-based connectivity and multivoxel pattern analysis (Abdulrahman & Henson, 2016).
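To make the notion of design efficiency concrete: for a general linear model with design matrix X and contrast c, efficiency is commonly defined as inversely proportional to trace(c (X'X)^-1 c'). In the simplest case of one stimulus regressor plus a constant term, this reduces to the sum of squared deviations of the predicted BOLD regressor from its mean. The sketch below uses that special case to compare a blocked design with a rapidly alternating one containing the same number of stimuli. It is a toy example under several assumptions (a fixed, known response shape, one-second sampling, no noise autocorrelation or high-pass filtering), and the function names and parameters are hypothetical.

```python
import math


def gamma_pdf(t, shape):
    """Gamma density with unit scale; zero for t <= 0."""
    if t <= 0:
        return 0.0
    return t ** (shape - 1) * math.exp(-t) / math.gamma(shape)


def hrf(t):
    """Illustrative haemodynamic response: difference of two gamma densities."""
    return gamma_pdf(t, 6.0) - gamma_pdf(t, 16.0) / 6.0


def predicted_bold(stimulus, dt=1.0, kernel_length=32.0):
    """Convolve a stimulus time-course (one sample per dt seconds) with the HRF."""
    kernel = [hrf(k * dt) for k in range(int(kernel_length / dt))]
    response = [0.0] * len(stimulus)
    for onset, amplitude in enumerate(stimulus):
        if amplitude:
            for lag, weight in enumerate(kernel):
                if onset + lag < len(response):
                    response[onset + lag] += amplitude * weight
    return response


def efficiency(stimulus):
    """Efficiency for detecting the stimulus effect (one regressor plus constant)."""
    x = predicted_bold(stimulus)
    mean = sum(x) / len(x)
    return sum((v - mean) ** 2 for v in x)


blocked = ([1] * 16 + [0] * 16) * 8   # 16 s on, 16 s off
alternating = [1, 0] * 128            # on/off every second, same stimulus count

print(efficiency(blocked), efficiency(alternating))
```

The blocked design comes out far more efficient here because the sluggish haemodynamic response smooths away the rapid alternation, leaving little variance in the predicted signal. Conclusions differ for other contrasts (e.g., between randomly intermixed event-types) and for other questions (e.g., estimating the shape of the response), as discussed in the papers and webpage cited above.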

A lot of the above ideas can also be found in chapters of various books, on topics such as design efficiency (Henson, 2015a; Henson, 2006), linear convolution models (Henson & Friston, 2006) and Analysis of Variance (Henson, 2015b; Penny & Henson, 2006a; Penny & Henson, 2006b). We have also written about more general issues such as new neuroimaging methods for studying memory (Greve & Henson, 2015) and the future of neuroimaging (Williams & Henson, 2018).

We have also contributed several example datasets, such as a multimodal dataset (fMRI, MRI, DTI, MEG, EEG) on face perception (Wakeman & Henson, 2015) which is available on OpenNeuro. Others are available on the SPM website and used for various chapters of the SPM manual. Other technical notes are available about ANOVAs (Henson & Penny 2003) and single-case studies (Henson, 2006), while code is available on my website or github.

We helped extend SPM to the analysis of EEG and MEG data. This concerns the use of Random Field Theory to correct for multiple comparisons in statistical maps across space-time (Henson et al, 2008) or frequency-time (Henson et al, 2005), and distributed solutions to the inverse problem (reconstruction of the cortical sources of EEG/MEG data), based on Karl Friston's Parametric Empirical Bayesian (PEB) framework. This includes how to localise induced as well as evoked responses (Friston et al, 2006), and how to incorporate multiple priors (regularisation terms) (Henson et al, 2007), to the extent that hundreds of sparse priors can be used (Friston et al, 2008). I have also used the PEB framework to explore forward models for MEG (Henson et al, 2009; Mattout et al, 2007) and to pool information across multiple forward models (subjects) when performing group-inversion (Henson et al, 2011). This has included contributing to the SPM code (e.g, as reviewed, for SPM8, in Litvak et al, 2011). With Olaf Hauk, we have also explored metrics for evaluating distributed inverse solutions (Hauk et al, 2011) and adaptive parcellation for optimising source space (Farahibozorg et al, 2018).

We have applied the PEB framework to multimodal integration, such as the symmetric integration (fusion) of MEG and EEG (Henson et al., 2009) and the asymmetric integration of M/EEG and fMRI (Henson et al., 2010), as reviewed in Henson et al. (2011). I am also interested in the theoretical relationship between M/EEG and fMRI (Kilner et al., 2005), and in the use of Dynamic Causal Modelling (DCM) to study effective connectivity between cortical sources reconstructed from MEG/EEG (e.g., Chen et al., 2009; Henson et al., 2012), including issues of model recovery and Bayesian model evidence (Litvak et al., 2019). We also explored the effectiveness of different means of de-facing MRIs for data-sharing, particularly for coregistration with MEG (Bruna et al., 2022).

Together with Linda Geerligs, we investigated the effects of ageing on fMRI functional connectivity, including the complex relationship between age, vascular health, head movement and fMRI connectivity (Geerligs et al., 2017), the difficulty of measuring group differences in dynamic fMRI connectivity (Lehmann et al., 2017) and the state-dependence of age-related changes in fMRI connectivity (Geerligs et al., 2015). We also introduced a new method for measuring multi-dimensional fMRI connectivity between regions-of-interest (Geerligs et al., 2016), which has many potential applications, including being more robust to ageing than traditional univariate measures (for review, see Basti et al., 2021).
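To illustrate what a multi-dimensional connectivity measure can capture that a univariate one cannot, here is a minimal sketch in Python. It uses distance correlation as one example of a multivariate dependence measure; the text above does not specify which measure was introduced, so this choice, the plain (uncorrected) estimator, the function names and the toy data are all assumptions made for illustration.

```python
import math


def centered_distances(samples):
    """Double-centre the matrix of Euclidean distances between time points."""
    n = len(samples)
    dist = [[math.sqrt(sum((a - b) ** 2 for a, b in zip(samples[i], samples[j])))
             for j in range(n)] for i in range(n)]
    row_means = [sum(row) / n for row in dist]
    grand_mean = sum(row_means) / n
    # dist is symmetric, so the row means also serve as column means
    return [[dist[i][j] - row_means[i] - row_means[j] + grand_mean
             for j in range(n)] for i in range(n)]


def mean_product(a, b):
    """Average of the element-wise product of two square matrices."""
    n = len(a)
    return sum(a[i][j] * b[i][j] for i in range(n) for j in range(n)) / n ** 2


def distance_correlation(x, y):
    """Plain (biased) sample distance correlation between two regions.

    x and y are lists of time points; each time point is a tuple of voxel values.
    """
    a, b = centered_distances(x), centered_distances(y)
    dcov2 = mean_product(a, b)
    denom = math.sqrt(mean_product(a, a) * mean_product(b, b))
    return math.sqrt(max(dcov2, 0.0) / denom) if denom > 0 else 0.0


# Toy data (invented): two voxels per region, anti-correlated within each region,
# so the voxel-averaged time course of each region is flat.
signal = [0.3, -1.2, 0.8, 2.0, -0.5, 1.1]
region_a = [(s, -s) for s in signal]
region_b = [(2 * s, -2 * s) for s in signal]

print(distance_correlation(region_a, region_b))
```

In this toy case the average across voxels is zero at every time point in both regions, so correlating the mean time courses would find nothing, whereas the multivariate measure still detects that the two voxel patterns fluctuate together.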

I have also done some work on voxel-based morphometry (VBM), particularly as applied to ageing (Good et al., 2001), and how to adjust for global changes with age (Peelle et al., 2012). We have also worked on pipelines for analysing large, multimodal datasets such as Cam-CAN (Taylor et al., 2017), and contributed to work showing the importance for statistical power of longer lags rather than larger samples (Vidal-Pineiro et al., 2025). We have also compared the advantages and disadvantages of multi-echo and multi-band sequences for fMRI (Halai et al., 2025) and of different tensor models of diffusion MRI (Henriques et al., 2023).

Finally, I have contributed to international guidelines for both fMRI (Poldrack et al., 2008) and MEG (Gross et al., 2013), including the definition of the BIDS standard for representing MEG data (Niso et al., 2018).

Short-term Memory for Serial Order

My past research, stemming from my PhD (http://www.mrc-cbu.cam.ac.uk/people/rik.henson/personal/thesis.html), concerns short-term memory, in particular memory for serial order (e.g., the order of digits in a novel telephone number). This involved finding stricter empirical evidence against previous "chaining" models (Henson et al., 1996) and developing a new computational model, the "Start-End Model" (Henson, 1998a), together with empirical tests of its predictions (Henson, 1999a) (for which I was awarded the first BPS prize for doctoral research, Henson, 2001). Two key issues in this model relate to the representation of position within a sequence, which appears to be relative to the start and end of that sequence (Henson, 1999b), and the representation of repeated items, for which effects like the Ranschburg effect support both type and token representations (Henson, 1998b). Some of the ideas were extended to examine the effects of ageing (Maylor & Henson, 2000) and development (McCormack et al., 2000) on short-term memory.
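The idea of coding position relative to both the start and the end of a sequence can be sketched in a few lines of Python. This is only an illustration of the principle: the exponential form of the two markers, the parameter values and the similarity function are assumptions chosen for the example, not the published model or its fitted parameters.

```python
import math


def position_codes(length, start_max=1.0, start_decay=0.8,
                   end_max=0.6, end_decay=0.5):
    """Return one (start_marker, end_marker) pair per position in a sequence.

    All parameter values are hypothetical and chosen only for illustration.
    """
    codes = []
    for i in range(1, length + 1):
        start = start_max * start_decay ** (i - 1)   # strongest at the first item
        end = end_max * end_decay ** (length - i)    # strongest at the last item
        codes.append((start, end))
    return codes


def overlap(code_a, code_b):
    """Illustrative similarity: 1 for identical codes, falling with distance."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(code_a, code_b))
    return math.exp(-squared_distance)


short_list = position_codes(4)
long_list = position_codes(6)

# Last item of the short list versus the last item of the long list
print(overlap(short_list[-1], long_list[-1]))
# Last item of the short list versus the fourth item of the long list
print(overlap(short_list[-1], long_list[3]))
```

With these settings the first value printed is larger than the second: the final item of a four-item list resembles the final item of a six-item list more than it resembles the item in the same absolute (fourth) position, which is the sense in which position is coded relative to the start and end of the sequence.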

Together with Neil Burgess and Graham Hitch, we considered how relative position within a sequence could be coded by temporal oscillators (Henson & Burgess, 1997), and performed some behavioural tests for the existence of such oscillators (Henson et al., 2003). We also considered how such a model of short-term memory for serial order might map onto the brain using fMRI (Henson et al., 2000).

During my MSc, I also briefly examined the short-term memory capacities of auto-associative neural networks (palimpsests) together with David Willshaw (Henson, 1993; Henson & Willshaw, 1995).

MRC Cognition and Brain Sciences Unit
